Enterprise PLM architecture is a critical component of any manufacturing organization’s overall strategy, as it helps to align technology with business goals and objectives. However, most established PLM platforms were created back in the days when a single “SQL database” was the mainstream design, and therefore nearly all PLM implementations today, except for some new cloud PLMs, are monolithic. This is in line with many other enterprise applications (mostly ERP, MES, and other legacy software), whose architectures are also monolithic. A monolithic architecture is tightly integrated and interdependent, and it actually hinders an organization’s ability to adapt and evolve. This raises many questions as organizations look at how to digitally transform what they do. In this article, we will explore the reasons why monolithic enterprise architecture is bad, and discuss alternative approaches that can help organizations be more agile and responsive to change.

PLM Monolithic History

In the past, the PLM industry, and especially product lifecycle management marketing, did a great job of presenting monolithic product data management architectures as the strongest element of the product value chain. A single source of truth was (and still is) a strong mantra in the product lifecycle management mindset and sales process. It is presented as the foundation of any PLM solution and of product development business processes. But things are changing: digital transformation is pushing customers to rethink their business processes in product data management, supply chain management, and project management, as well as the overall strategies of global manufacturers.

This is where the digital thread begins. A digital thread is a term used to describe a holistic, end-to-end digital representation of an asset, product, or process throughout its entire lifecycle. It connects the data, information, and knowledge about an asset or product from its initial design and engineering, through its manufacturing, operation, and maintenance, to its eventual decommissioning. Additionally, a digital thread allows the organization to have a single source of truth, which can be accessed by different departments and teams. This allows for better collaboration and less siloed decision-making.
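To make the idea a bit more concrete, here is a minimal sketch of a digital thread as a set of linked records that can be traversed end to end. Everything in it is an assumption for illustration: the record IDs, the stage names, and the `trace_thread` helper are hypothetical and do not come from any specific PLM product.

```python
from collections import deque

# Hypothetical records: each one would live in a different system
# and point to related records by ID.
RECORDS = {
    "req:R-1": {"stage": "requirements", "links": ["cad:P-100"]},
    "cad:P-100": {"stage": "design", "links": ["bom:B-7"]},
    "bom:B-7": {"stage": "manufacturing", "links": ["svc:S-3"]},
    "svc:S-3": {"stage": "maintenance", "links": []},
    "cad:P-200": {"stage": "design", "links": []},  # unrelated part
}


def trace_thread(start_id, records):
    """Breadth-first walk over links; returns record IDs in visit order."""
    visited = []
    seen = {start_id}
    queue = deque([start_id])
    while queue:
        record_id = queue.popleft()
        visited.append(record_id)
        for linked_id in records[record_id]["links"]:
            if linked_id not in seen:
                seen.add(linked_id)
                queue.append(linked_id)
    return visited


thread = trace_thread("req:R-1", RECORDS)
stages = [RECORDS[record_id]["stage"] for record_id in thread]
```

Starting from a requirement, the walk reaches the design, manufacturing, and maintenance records that are linked to it and skips the unrelated part. The point of the sketch is that the thread is defined by the links, not by where each record is stored.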

But this is where monolithic architecture hits every mature PLM system the hardest. Legacy PLM systems that are 25-30 years old are not aligned with modern development practices and microservices architecture, and they stop companies from getting access to up-to-date information and real-time data.

Killing Monolithic Architecture Approach

Check my article from last year, A Post Monolithic PLM world: Data and System Architecture. Many things in the publication resonated with my view of digital transformation in PLM architecture and the coming new capabilities of cloud-native PLM platforms.

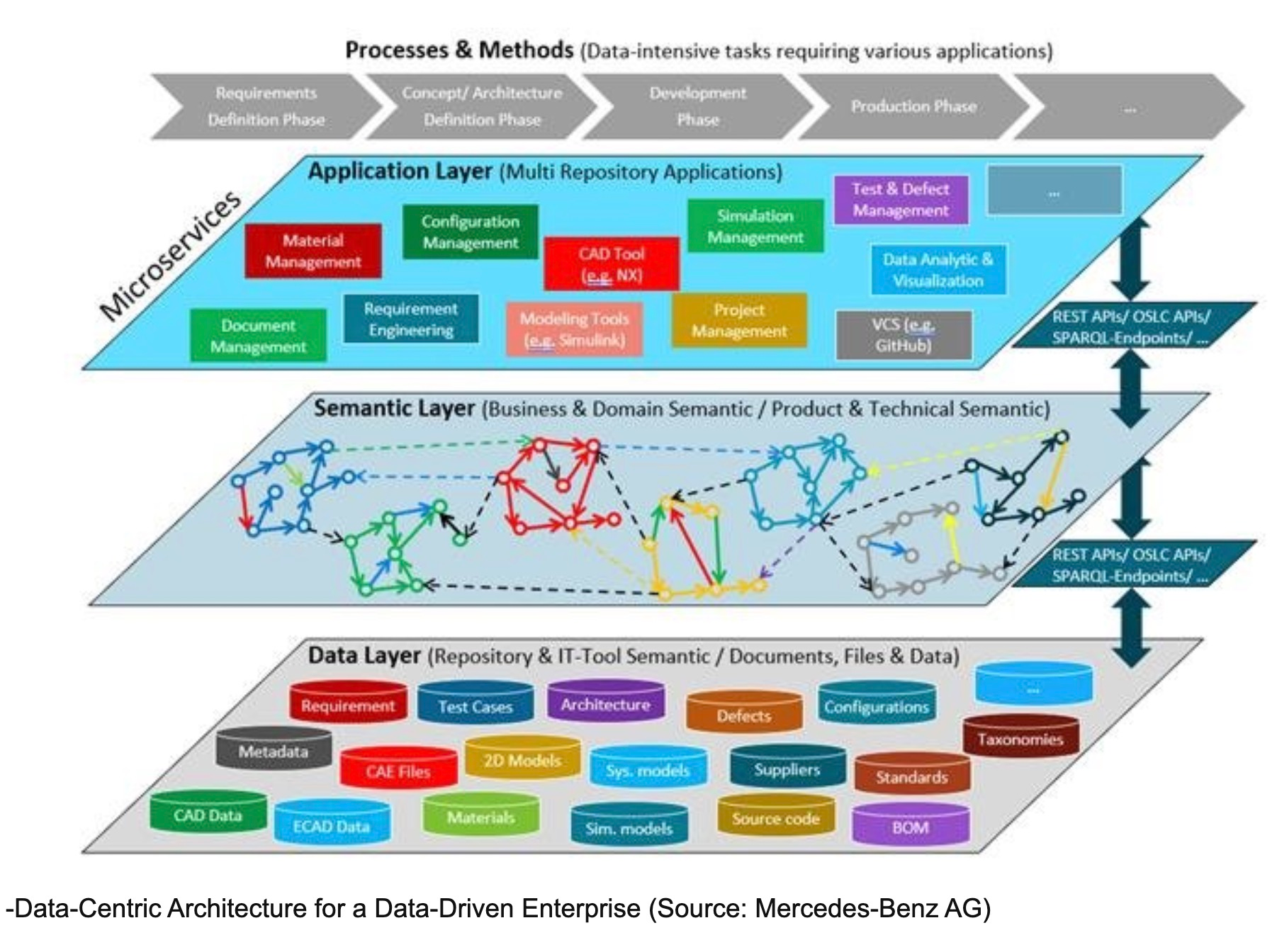

My attention was caught by the publication called Killing the PLM Monolith – the Emergence of cloud-native System Lifecycle Management (SysLM), which I found very insightful. The central points of the new approach are the following: Polyglot persistence, Microservices, Semantic technologies (Semantic web), Linked Data, System Lifecycle Management, and Cloud-native system approach. Here are a few passages and my favorite picture.

If you have no time to read the publication, here are 10 points you can use as a TL;DR. They can give you an idea of where modern PLM architecture can go.

- Microservices are an architectural prerequisite for agile DevOps processes due to the fact that team autonomy can only be achieved if dependencies within a software’s architecture can be brought under control.

- Without the openness of the involved IT applications, digitalization cannot be scaled to an enterprise level. Most use cases currently discussed for AI, Data Analytics, and IOT will just not work if this openness isn’t ensured.

- MBSE needs access to engineering data and mature lifecycle, configuration, and data management capabilities. Configuration management is way more than just versioning and baselining.

- Microservices give freedom to choose the best technology – be it UI, persistence, or programming language – to solve the domain problem at hand in the best way.

- Decentralized Environments need semantic technologies to query not just one database, but a network of databases. Linked Data in contrast to the original Semantic Web is a pragmatic approach focusing on easy-to-understand and therefore easy-to-scale principles.

- A Microservice-based Architecture needs in addition to Linked Data technologies also asynchronous data exchange for example by using message brokers. Linked data and messaging brokers may serve as the necessary middleware for cloud-native SysLM.

- Model-Based Systems Engineering and the system model can serve as an interdisciplinary metamodel. It cannot achieve anything beyond a pilot project if it is not connected to existing engineering data that lives within distributed engineering tools.

- Systems Modeling itself will eventually also move to a cloud infrastructure, making it necessary to support the authoring of a system model within the web client.

- Data Protection and Privacy laws will require the establishment of local data centers as moving sensitive (and personal) data between jurisdictions will be inhibited. For example between the US and the EU.

- The open API philosophy will be strengthened in the years to come also by legislation and precedent cases. Closing APIs to prevent competition can already be seen as a distortion of competition. Legislation is slowly but effectively moving in a direction where openness and interoperability are more important and of higher economic and social value than copyright protection of API terminology.

I found that these 10 points articulate the strategy very well.
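The points about asynchronous data exchange and Linked Data can be sketched in a few lines. This is only a toy, in-process stand-in for a message broker, assuming a hypothetical `part.released` topic, a hypothetical BOM service, and an example URI under `plm.example.com`. A real deployment would use an actual broker product and real service endpoints.

```python
from collections import defaultdict, deque


class Broker:
    """Toy in-process stand-in for a message broker (hypothetical)."""

    def __init__(self):
        self._subscribers = defaultdict(list)
        self._pending = deque()

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, message):
        # The publisher returns immediately; delivery happens later.
        self._pending.append((topic, message))

    def deliver_all(self):
        """Deliver queued messages; returns the number of handler calls."""
        delivered = 0
        while self._pending:
            topic, message = self._pending.popleft()
            for handler in self._subscribers[topic]:
                handler(message)
                delivered += 1
        return delivered


broker = Broker()
bom_view = {}  # state owned by a hypothetical BOM service


def on_part_released(event):
    # The part is referenced by URI, in the spirit of Linked Data.
    bom_view[event["part"]] = event["revision"]


broker.subscribe("part.released", on_part_released)
broker.publish(
    "part.released",
    {"part": "https://plm.example.com/parts/P-100", "revision": "B"},
)
view_before_delivery = dict(bom_view)  # nothing has arrived yet
handler_calls = broker.deliver_all()
```

The design service does not call the BOM service directly and does not wait for it. Each service keeps its own data, and the URI in the event is what ties the two views of the same part together.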

5 Steps to create a strategy to break the PLM monolith

While the discussions about how to break monolithic PLM architectures are not surprising these days, I found that most companies are struggling with the implementations. On the side of industrial companies, a programmatic strategy and a specific technology roadmap are missing. On the side of PLM vendors, I can see how leading vendors continue to offer their legacy monolithic architectures as solutions for digital thread implementations.

It made me think about 5 steps an industrial company can take to break monolithic PLM architecture implementations.

- Identify data sources. This is a very important step, which will help you find all sources of information, learn which systems are in use, and understand the depth of the technological barrier.

- Find a platform for the digital thread. It can be one of the existing products (including PLM), but you should keep in mind that the platform must be scalable to support multiple use cases and become a solid place an organization can use to manage an entire set of information relationships, spanning computer-aided design, requirements management, digital twins, and product lifecycle management.

- Implement data source web services. This step answers the question of “how to get data”: it defines how data from each source will become available.

- Test the solution on a small scale and demonstrate the value of using the new system to align all processes across multiple teams, systems, and companies.

- Scale up. Sounds simple, but this is the stage when all the data is plugged in and becomes available to everyone.
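Here is how the first, third, and fourth steps might look in a minimal sketch. It assumes a hypothetical registry of data sources where each source exposes a `fetch` function. In a real project, `fetch` would call that system's web service; here it returns canned data so the example stays self-contained. All names and values are made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class DataSource:
    name: str    # what kind of information this source holds
    system: str  # which system it lives in (step 1: identify)
    fetch: Callable[[str], List[dict]]  # stand-in for a web service (step 3)


def fetch_design(part_number: str) -> List[dict]:
    # Hypothetical response from a design data service.
    return [{"part": part_number, "file": "bracket.step"}]


def fetch_purchasing(part_number: str) -> List[dict]:
    # Hypothetical response from a purchasing data service.
    return [{"part": part_number, "supplier": "ACME", "cost": 4.2}]


SOURCES = [
    DataSource("design", "CAD vault", fetch_design),
    DataSource("purchasing", "ERP", fetch_purchasing),
]


def collect(part_number: str, sources: List[DataSource]) -> Dict[str, List[dict]]:
    """Ask every given source what it knows about a part."""
    return {source.name: source.fetch(part_number) for source in sources}


# Step 4: pilot with a single source before plugging in the rest.
pilot = collect("P-100", SOURCES[:1])
# Step 5: scale up to all registered sources.
full = collect("P-100", SOURCES)
```

Keeping every source behind the same small interface is what makes scaling up mostly a matter of registering more sources, rather than rewriting the platform for each new system.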

Conclusion:

I don’t see many alternatives to breaking up existing PLM architectures. But it will be a complex process for every company, one that will require the delivery of new technological platforms, aligned with current legacy PLM platforms, that act as a system of engagement and intelligence to absorb and connect the data from existing applications and people.

At the same time, using an existing old PLM solution as the foundation of a digital thread can be a challenge, especially in the long run. Bringing in PLM tech might be a good conceptual approach to help companies understand the idea and work on the strategy, but bringing an entire linked data set and knowledge graph to be managed by existing PLM systems is probably not the best approach. These systems do not fit the role of a sustainable digital thread platform.

New systems and architecture will have to come to deal with these problems. Just my thoughts…

Best, Oleg

Disclaimer: I’m co-founder and CEO of OpenBOM developing a digital cloud-native PDM & PLM platform that manages product data and connects manufacturers, construction companies, and their supply chain networks. My opinion can be unintentionally biased.